Unlocking the Power of Reasoning in LLMs with NVIDIA NeMo

Demand is growing for language models that can handle reasoning tasks, and Large Language Models (LLMs) are the main route to building them. Among the tools available for training such models, NVIDIA’s NeMo is a capable and approachable option. This article walks through training a reasoning-capable LLM with NVIDIA NeMo over a single weekend.

Understanding Large Language Models

Large Language Models (LLMs) leverage deep learning techniques to understand and generate human-like text. These models can be fine-tuned to perform a variety of tasks, including language translation, summarization, and, importantly, reasoning. Reasoning involves drawing conclusions or making inferences from given data, a task that traditional models often struggle with.

What Makes Reasoning Important?

Incorporating reasoning capabilities into LLMs enhances their performance on complex tasks. This not only improves the model’s accuracy but also enriches user interactions. Businesses and researchers are eager to harness these advanced capabilities for applications in customer support, content creation, and data analysis.

Introducing NVIDIA NeMo

NVIDIA NeMo is an open-source toolkit designed to facilitate the training and fine-tuning of state-of-the-art language models. It provides a framework that simplifies the workflow, allowing users to focus on model architecture and training strategies, making it ideal for both beginners and seasoned professionals.

Key Features of NeMo

- Modularity: NeMo’s modular nature allows users to choose and customize the components of their language models.

- Pre-trained Models: It offers access to a variety of pre-trained models, which can be fine-tuned for specific applications.

- GPU Acceleration: The toolkit is optimized for NVIDIA GPUs, ensuring efficient training processes.

Getting Started with NeMo

To kick off your journey in training a reasoning-capable LLM, follow these steps:

Step 1: Setting Up Your Environment

Before diving into model training, it’s essential to set up a conducive working environment. This includes:

- Hardware Requirements: Ensure you have access to an NVIDIA GPU with adequate memory for effective processing. A powerful GPU can significantly reduce training time.

- Software Installation: Install the necessary software packages, including PyTorch and NeMo. You can do this through pip:

```bash
pip install "nemo_toolkit[all]"
```
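Before starting a long training run, it can help to confirm that the install worked and that a GPU is visible. The following is a minimal sketch rather than an official NeMo utility: the helper name `check_environment` is hypothetical, and it assumes the packages are importable as `torch` and `nemo`.

```python
import importlib.util


def check_environment(packages=("torch", "nemo")):
    """Report which packages are importable and whether a CUDA GPU is visible."""
    # find_spec returns None for a top-level package that is not installed.
    report = {name: importlib.util.find_spec(name) is not None for name in packages}
    report["cuda_available"] = False
    if report.get("torch"):
        import torch  # imported lazily so the check also runs without PyTorch

        report["cuda_available"] = torch.cuda.is_available()
        if report["cuda_available"]:
            props = torch.cuda.get_device_properties(0)
            report["gpu_name"] = props.name
            report["gpu_memory_gb"] = round(props.total_memory / 1024**3, 1)
    return report
```

Calling `print(check_environment())` after installation shows at a glance whether PyTorch, NeMo, and a CUDA device were found, and how much memory the first GPU reports.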

Step 2: Select a Base Model

Choosing the right base model is crucial for achieving optimal results. NeMo provides several pre-trained models:

- GPT-style Models: Best for generative tasks.

- BERT-style Models: Suitable for tasks requiring understanding context.

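Select a model that aligns with your reasoning goals. For instance, if the model must write out its answer or explain its steps, a GPT-style model is the natural fit, while a BERT-style model may be sufficient for classification-style tasks such as choosing among multiple-choice answers.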

Step 3: Fine-Tuning the Model

Fine-tuning is where the magic happens. Here’s how you can tailor the selected model:

- Dataset Preparation: Gather a dataset that contains examples requiring reasoning. This could include datasets like the ARC (AI2 Reasoning Challenge) or others specific to your domain.

- Configuration: Adjust the configuration files in NeMo to set the hyperparameters, including learning rate, training epochs, and batch size. This process allows you to customize the training procedure according to your dataset’s characteristics.

- Training: Initiate the training process using NeMo’s training scripts. Monitor the training closely, as adjustments may be needed based on the model’s performance.
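As an illustration of the dataset preparation step, here is a minimal sketch that turns ARC-style multiple-choice records into prompt and answer pairs stored as JSON Lines. The record fields (`question.stem`, `question.choices`, `answerKey`) follow the ARC JSONL layout as commonly distributed; the `input`/`output` key names, the helper names, and the sample record are assumptions for illustration, so check the data format your NeMo version expects before training.

```python
import json


def arc_record_to_example(record):
    """Turn one ARC-style multiple-choice record into an input/output pair."""
    question = record["question"]
    lines = [f"Question: {question['stem']}"]
    for choice in question["choices"]:
        lines.append(f"{choice['label']}. {choice['text']}")
    lines.append("Answer:")
    # "input" and "output" are assumed key names, not a confirmed NeMo schema.
    return {"input": "\n".join(lines), "output": record["answerKey"]}


def write_jsonl(records, path):
    """Write one JSON object per line, skipping records with no valid answer key."""
    written = 0
    with open(path, "w", encoding="utf-8") as handle:
        for record in records:
            labels = {c["label"] for c in record["question"]["choices"]}
            if record.get("answerKey") not in labels:
                continue  # drop malformed rows instead of training on them
            handle.write(json.dumps(arc_record_to_example(record)) + "\n")
            written += 1
    return written


# Made-up record for illustration only; it is not taken from the ARC dataset.
sample = {
    "id": "example-1",
    "question": {
        "stem": "Which object is attracted to a magnet?",
        "choices": [
            {"label": "A", "text": "a wooden spoon"},
            {"label": "B", "text": "an iron nail"},
        ],
    },
    "answerKey": "B",
}
```

Filtering out rows whose answer key does not match any choice is a cheap first pass at data quality; noisier domain data usually needs more checks than this.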

Effective Evaluation Strategies

Once the model is trained, thorough evaluation is essential. Consider these evaluation strategies:

Metrics to Monitor

- Accuracy: Measure the correctness of the model’s predictions.

- F1 Score: The harmonic mean of precision and recall. It is more informative than accuracy alone when some answer classes are much rarer than others.
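Both metrics are simple enough to compute by hand, which is useful for sanity-checking whatever evaluation tooling you use. The sketch below is plain Python with hypothetical function names; it assumes each prediction is a single label (for example, a multiple-choice letter) and averages per-class F1 into a macro F1.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that exactly match the reference labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true, y_pred, positive):
    """F1 for one class: harmonic mean of precision and recall."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every label that appears."""
    labels = sorted(set(y_true) | set(y_pred))
    return sum(f1_score(y_true, y_pred, label) for label in labels) / len(labels)


# Made-up labels for illustration.
y_true = ["A", "B", "B", "C"]
y_pred = ["A", "B", "C", "C"]
print(accuracy(y_true, y_pred))            # 0.75
print(round(macro_f1(y_true, y_pred), 4))  # 0.7778
```

In this toy example one "B" question is answered as "C", which lowers recall for B and precision for C, so macro F1 is pulled down even though class A is perfect.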

Validation Datasets

Using a separate validation dataset lets you check whether the model’s reasoning capabilities generalize beyond the training data. Test the model against known reasoning tasks to benchmark its effectiveness.
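If your dataset does not ship with a validation split, one can be carved out before training. This is a minimal sketch with a hypothetical helper name; the 10% default and the fixed seed are assumptions chosen for reproducibility, not recommendations from NeMo.

```python
import random


def train_validation_split(examples, validation_fraction=0.1, seed=0):
    """Shuffle indices with a fixed seed and hold out a fraction for validation."""
    if not 0.0 < validation_fraction < 1.0:
        raise ValueError("validation_fraction must be between 0 and 1")
    if len(examples) < 2:
        raise ValueError("need at least two examples to split")
    indices = list(range(len(examples)))
    random.Random(seed).shuffle(indices)
    n_val = max(1, round(len(examples) * validation_fraction))
    val_indices = set(indices[:n_val])
    # Preserve the original order within each split.
    train = [ex for i, ex in enumerate(examples) if i not in val_indices]
    val = [ex for i, ex in enumerate(examples) if i in val_indices]
    return train, val
```

Fixing the seed keeps the held-out examples the same from run to run, so validation scores from different training runs stay comparable.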

Fine-Tuning for Real-World Applications

To truly harness the potential of your reasoning-capable LLM, consider fine-tuning it further for specific tasks. This stage involves:

- Identifying Use Cases: Pinpoint specific applications where reasoning can enhance the model’s functionality, such as healthcare data analysis or automated customer support.

- Additional Training: Fine-tune the model further with domain-specific data to improve its contextual understanding and reasoning capabilities.

Challenges You May Encounter

Training a reasoning-capable LLM is not without its challenges. Here are some common hurdles and tips to overcome them:

- Data Quality: High-quality, relevant datasets are crucial. If the dataset is noisy, the model’s reasoning will suffer. Invest time in curating your data.

- Overfitting: Watch out for overfitting during training. Implement strategies like dropout layers and early stopping to enhance the model’s generalization.

- Computational Resources: Make sure your hardware can handle the training load. If resources are limited, consider cloud-based solutions for scalable training.
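Early stopping, mentioned above as a guard against overfitting, can be sketched in a few lines. The class below is a framework-independent illustration with hypothetical names and made-up loss values. In practice, NeMo is built on PyTorch Lightning, which provides its own `EarlyStopping` callback, so you would normally configure that rather than write your own.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` checks."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.bad_checks = 0

    def step(self, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.bad_checks = 0
        else:
            self.bad_checks += 1
        return self.bad_checks >= self.patience


# Made-up validation losses: improvement stalls after the third check.
losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.69]
stopper = EarlyStopping(patience=3)
stopped_at = None
for check, loss in enumerate(losses):
    if stopper.step(loss):
        stopped_at = check
        break
print(stopped_at, stopper.best_loss)  # 5 0.7
```

Here training halts at the sixth check (index 5), before the later 0.69 is ever seen. That is the trade-off with a small patience: it saves compute but can stop just before a late improvement.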

The Future of LLMs with Reasoning Capabilities

As technology advances, the role of Large Language Models will continue to expand. The integration of reasoning capabilities will create opportunities for more intelligent applications across various sectors, from education to finance.

Conclusion

Training a reasoning-capable LLM in a weekend might seem ambitious, but with NVIDIA NeMo, it’s an achievable goal. By carefully setting up your environment, selecting the right model, and fine-tuning it appropriately, you can unlock the potential of advanced language processing. As you experiment and deploy these models, you will likely find new ways to leverage their capabilities, enriching both user experiences and business operations. Embrace the journey and contribute to the evolution of AI-driven reasoning.

Elementor Pro

In stock

PixelYourSite Pro

In stock

Rank Math Pro

In stock

Related posts

Building a WordPress Plugin | Jon learns to code with AI

How to add custom Javascript code to WordPress website

6 Best FREE WordPress Contact Form Plugins In 2025!

Solve Puzzles to Silence Alarms and Boost Alertness

Conheça AI do WordPress para construção de sites

WordPress vs Shopify: The Ultimate Comparison for Online Store Owners | Shopify Tutorial

Apple Ends iCloud Support for iOS 10, macOS Sierra on Sept 15, 2025

How to Speed up WordPress Website using AI 🔥(RapidLoad AI Plugin Review)

Bringing AI Agents Into Any UI: The AG-UI Protocol for Real-Time, Structured Agent–Frontend Streams

Web Hosting vs WordPress Web Hosting | The Difference May Break Your Site

Google Lays Off 200+ AI Contractors Amid Unionization Disputes

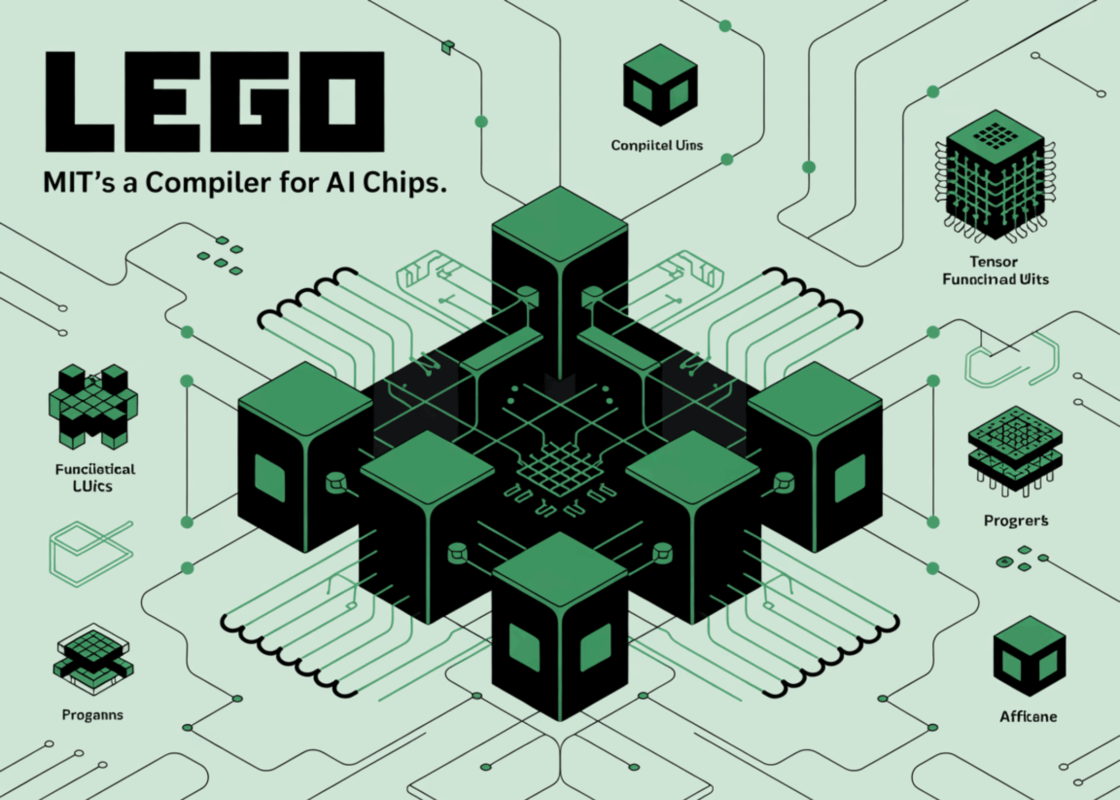

MIT’s LEGO: A Compiler for AI Chips that Auto-Generates Fast, Efficient Spatial Accelerators

Products

-

Rayzi : Live streaming, PK Battel, Multi Live, Voice Chat Room, Beauty Filter with Admin Panel

Rayzi : Live streaming, PK Battel, Multi Live, Voice Chat Room, Beauty Filter with Admin Panel

$98.40Original price was: $98.40.$34.44Current price is: $34.44.In stock

-

Team Showcase – WordPress Plugin

Team Showcase – WordPress Plugin

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

ChatBot for WooCommerce – Retargeting, Exit Intent, Abandoned Cart, Facebook Live Chat – WoowBot

ChatBot for WooCommerce – Retargeting, Exit Intent, Abandoned Cart, Facebook Live Chat – WoowBot

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

FOX – Currency Switcher Professional for WooCommerce

FOX – Currency Switcher Professional for WooCommerce

$41.00Original price was: $41.00.$4.02Current price is: $4.02.In stock

-

WooCommerce Attach Me!

WooCommerce Attach Me!

$41.00Original price was: $41.00.$4.02Current price is: $4.02.In stock

-

Magic Post Thumbnail Pro

Magic Post Thumbnail Pro

$53.71Original price was: $53.71.$3.69Current price is: $3.69.In stock

-

Bus Ticket Booking with Seat Reservation PRO

Bus Ticket Booking with Seat Reservation PRO

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

GiveWP + Addons

GiveWP + Addons

$53.71Original price was: $53.71.$3.85Current price is: $3.85.In stock

-

JetBlog – Blogging Package for Elementor Page Builder

JetBlog – Blogging Package for Elementor Page Builder

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

ACF Views Pro

ACF Views Pro

$62.73Original price was: $62.73.$3.94Current price is: $3.94.In stock

-

Kadence Theme Pro

Kadence Theme Pro

$53.71Original price was: $53.71.$3.69Current price is: $3.69.In stock

-

LoginPress Pro

LoginPress Pro

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

ElementsKit – Addons for Elementor

ElementsKit – Addons for Elementor

$53.71Original price was: $53.71.$4.02Current price is: $4.02.In stock

-

CartBounty Pro – Save and recover abandoned carts for WooCommerce

CartBounty Pro – Save and recover abandoned carts for WooCommerce

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Checkout Field Editor and Manager for WooCommerce Pro

Checkout Field Editor and Manager for WooCommerce Pro

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Social Auto Poster

Social Auto Poster

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Vitepos Pro

Vitepos Pro

$53.71Original price was: $53.71.$12.30Current price is: $12.30.In stock

-

Digits : WordPress Mobile Number Signup and Login

Digits : WordPress Mobile Number Signup and Login

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

JetEngine For Elementor

JetEngine For Elementor

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

BookingPress Pro – Appointment Booking plugin

BookingPress Pro – Appointment Booking plugin

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Polylang Pro

Polylang Pro

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

All-in-One WP Migration Unlimited Extension

All-in-One WP Migration Unlimited Extension

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Slider Revolution Responsive WordPress Plugin

Slider Revolution Responsive WordPress Plugin

$53.71Original price was: $53.71.$4.51Current price is: $4.51.In stock

-

Advanced Custom Fields (ACF) Pro

Advanced Custom Fields (ACF) Pro

$53.71Original price was: $53.71.$3.94Current price is: $3.94.In stock

-

Gillion | Multi-Concept Blog/Magazine & Shop WordPress AMP Theme

Rated 4.60 out of 5

Gillion | Multi-Concept Blog/Magazine & Shop WordPress AMP Theme

Rated 4.60 out of 5$53.71Original price was: $53.71.$5.00Current price is: $5.00.In stock

-

Eidmart | Digital Marketplace WordPress Theme

Rated 4.70 out of 5

Eidmart | Digital Marketplace WordPress Theme

Rated 4.70 out of 5$53.71Original price was: $53.71.$5.00Current price is: $5.00.In stock

-

Phox - Hosting WordPress & WHMCS Theme

Rated 4.89 out of 5

Phox - Hosting WordPress & WHMCS Theme

Rated 4.89 out of 5$53.71Original price was: $53.71.$5.17Current price is: $5.17.In stock

-

Cuinare - Multivendor Restaurant WordPress Theme

Rated 4.14 out of 5

Cuinare - Multivendor Restaurant WordPress Theme

Rated 4.14 out of 5$53.71Original price was: $53.71.$5.17Current price is: $5.17.In stock

-

Eikra - Education WordPress Theme

Rated 4.60 out of 5

Eikra - Education WordPress Theme

Rated 4.60 out of 5$62.73Original price was: $62.73.$5.08Current price is: $5.08.In stock

-

Tripgo - Tour Booking WordPress Theme

Rated 5.00 out of 5

Tripgo - Tour Booking WordPress Theme

Rated 5.00 out of 5$53.71Original price was: $53.71.$4.76Current price is: $4.76.In stock