Scaling AI Inference Performance and Flexibility with NVIDIA NVLink and NVLink Fusion

Understanding AI Inference Performance

Artificial Intelligence (AI) is reshaping industries, and deploying it effectively hinges on two primary factors: performance and flexibility. As organizations move AI solutions into production, scalability becomes crucial. This blog post explores how NVIDIA’s NVLink and NVLink Fusion help scale AI inference.

What is AI Inference?

AI inference involves using a trained model to make predictions or decisions based on new input data. This process is vital in applications ranging from natural language processing to computer vision. The efficiency and speed of inference can significantly impact user experience and operational costs, making high-performance computing essential.

The Importance of Performance in AI

In AI systems, performance refers to how quickly and efficiently a model processes data without giving up accuracy. For inference it is usually expressed as latency (how long one request takes) and throughput (how many requests are served per unit of time). High-performance inference engines help organizations achieve faster responses and better outcomes. These metrics depend heavily on the hardware and architecture in use, which is where NVIDIA’s technologies come into play.

Challenges in AI Inference

Organizations face various challenges when scaling their AI inference operations, including:

- Latency: Low latency is critical for real-time applications, where delays can diminish user satisfaction.

- Throughput: The system must handle large volumes of data without bottlenecks.

- Flexibility: Organizations often need to adapt their AI solutions to various workloads and applications, making adaptable architectures a necessity.
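Latency and throughput pull against each other, which a few lines of arithmetic make concrete. The sketch below is a minimal illustration that assumes a server processing one batch at a time; the function name and the measurements are hypothetical, not benchmark results.

```python
def throughput_rps(batch_size: int, batch_latency_s: float) -> float:
    """Requests completed per second when one batch is processed at a time."""
    if batch_size <= 0 or batch_latency_s <= 0:
        raise ValueError("batch_size and batch_latency_s must be positive")
    return batch_size / batch_latency_s


# Hypothetical measurements: (batch size, seconds to finish the whole batch).
measurements = [(1, 0.020), (8, 0.050), (32, 0.160)]

for batch_size, latency_s in measurements:
    rps = throughput_rps(batch_size, latency_s)
    print(
        f"batch={batch_size:>2}  "
        f"latency={latency_s * 1000:.0f} ms  "
        f"throughput={rps:.0f} req/s"
    )
```

In these made-up numbers, larger batches raise throughput, but every request in the batch waits longer for its answer. Real-time applications therefore tend to cap the batch size, while offline workloads can push it higher.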

Introducing NVIDIA NVLink

NVIDIA NVLink is a high-speed interconnect that lets multiple GPUs exchange data directly with one another. Beyond raw transfer speed, it allows GPUs to access each other's memory, which is essential for complex AI models that do not fit within the resources of a single GPU.

Key Features of NVLink

- Increased Bandwidth: NVLink provides significantly higher bandwidth compared to traditional PCIe connections. This allows for faster data transfer rates between GPUs, leading to improved performance in AI inference tasks.

- Shared Memory Access: With NVLink, a GPU can read and write memory attached to another GPU directly (peer-to-peer access), so multiple GPUs can work on a common address space. This capability is vital for large-scale AI models, as it allows for efficient data sharing and reduces the need for data replication.

- Scalability: NVLink is designed so that additional GPUs can join the same fabric, and NVLink Switch extends that fabric beyond a handful of directly connected GPUs. This scalability is particularly beneficial for organizations that need to expand their AI capabilities without overhauling their infrastructure.
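To get a feel for what the bandwidth difference means in practice, the following sketch estimates how long it takes to move a block of data between two GPUs. The bandwidth values are round, nominal per-direction figures assumed for illustration (roughly PCIe Gen5 x16 and fourth-generation NVLink); they ignore protocol overhead and will differ by product generation, so check the datasheet for your hardware.

```python
GB = 1e9  # bytes in a (decimal) gigabyte

# Assumed nominal per-direction bandwidths in bytes per second.
# These are illustrative round numbers, not measured values.
LINKS = {
    "PCIe Gen5 x16": 64 * GB,
    "NVLink (4th gen, per GPU)": 450 * GB,
}


def transfer_time_s(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Ideal transfer time, ignoring link latency and protocol overhead."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return num_bytes / bandwidth_bytes_per_s


payload_bytes = 4 * GB  # hypothetical payload, e.g. cached activations

for name, bandwidth in LINKS.items():
    millis = transfer_time_s(payload_bytes, bandwidth) * 1000
    print(f"{name:<28} {millis:7.2f} ms")
```

The absolute numbers matter less than the ratio: when GPUs exchange data on every inference step, a several-fold difference in link bandwidth shows up directly in end-to-end latency.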

NVLink Fusion: The Next Step

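While NVLink enhances GPU communication, NVLink Fusion takes it a step further. It opens the NVLink scale-up fabric to semi-custom designs, so that custom CPUs and accelerators can be integrated with NVIDIA's NVLink-based rack-scale infrastructure. Software can then treat the devices on one NVLink domain much like a single, tightly coupled pool, which simplifies the programming model and resource allocation.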

Advantages of NVLink Fusion

- Streamlined Workflows: When the accelerators on an NVLink fabric can be treated as a single logical unit, much of the complexity associated with parallel computing is reduced. Developers can focus on model performance rather than on the intricacies of resource distribution.

- Optimized Resource Management: Workloads can be allocated across all the devices on the fabric, improving overall efficiency. This can lead to higher throughput and reduced latency, which suits demanding AI inference tasks.

- Enhanced Performance for Large Models: As AI models become increasingly complex, running them across multiple GPUs as a unified system lets their weights and working data be split over the combined memory, which can result in quicker inference times.
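A quick back-of-the-envelope calculation shows why large models need several GPUs working as one system. The sketch below assumes a hypothetical 70-billion-parameter model stored in 16-bit precision and hypothetical 80 GB GPUs, with only part of each GPU's memory reserved for weights; all of these values and function names are illustrative assumptions.

```python
import math


def weights_gb(num_params: float, bytes_per_param: int) -> float:
    """Memory needed for the model weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def min_gpus(total_gb: float, gpu_memory_gb: float, usable_fraction: float) -> int:
    """Smallest GPU count whose usable memory can hold total_gb of weights."""
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    return math.ceil(total_gb / (gpu_memory_gb * usable_fraction))


# Hypothetical model: 70e9 parameters at 2 bytes each (16-bit weights).
total = weights_gb(70e9, 2)

# Hypothetical 80 GB GPUs, keeping 30% of memory free for activations and caches.
needed = min_gpus(total, gpu_memory_gb=80, usable_fraction=0.7)

print(f"weights: {total:.0f} GB")
print(f"GPUs needed: {needed}")
print(f"weights per GPU: {total / needed:.1f} GB")
```

Once a model is split this way, the GPUs must exchange intermediate results on every request, which is where the interconnect between them becomes the limiting factor.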

The Role of Software in AI Inference

While hardware advancements are crucial, software optimization is equally important in maximizing AI inference performance. Tools and frameworks optimized for NVIDIA architectures can help organizations harness the full potential of NVLink and NVLink Fusion.

Key Software Solutions

- CUDA: NVIDIA’s parallel computing platform, CUDA, allows developers to leverage GPU power effectively. Using CUDA in conjunction with NVLink can lead to significant performance gains in inference tasks.

- TensorRT: This deep learning inference optimizer and runtime is designed specifically to maximize performance on NVIDIA GPUs. TensorRT can increase throughput and reduce latency for AI models deployed in production.

- Framework Compatibility: Many popular machine learning frameworks, such as TensorFlow and PyTorch, have been optimized for NVIDIA GPUs. Utilizing these frameworks can streamline the deployment of AI applications while ensuring that underlying hardware gains are fully leveraged.
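As a small example of how software sees the interconnect, the sketch below uses PyTorch to list which GPU pairs can access each other's memory directly. It is written to return an empty list on machines without PyTorch or without CUDA GPUs. Note that peer access can also be available over PCIe, so a positive result does not by itself prove that two GPUs are linked by NVLink; `nvidia-smi topo -m` reports the actual link type. The helper name `peer_access_pairs` is hypothetical.

```python
def peer_access_pairs():
    """Return (src, dst) GPU index pairs where src can directly access dst's memory.

    Returns an empty list when PyTorch or CUDA GPUs are not available.
    """
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    count = torch.cuda.device_count()
    return [
        (src, dst)
        for src in range(count)
        for dst in range(count)
        if src != dst and torch.cuda.can_device_access_peer(src, dst)
    ]


pairs = peer_access_pairs()
if pairs:
    for src, dst in pairs:
        print(f"GPU {src} can access GPU {dst} directly")
else:
    print("No peer-to-peer capable GPU pairs detected")
```

Frameworks and communication libraries perform checks of this kind internally when deciding how to move tensors between devices, so application code rarely needs to call them directly.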

Future Trends in AI Inference

As AI technology continues to evolve, several trends are emerging that may shape the future of AI inference:

- Continued Hardware Improvements: Future iterations of GPUs and interconnect technologies like NVLink are likely to deliver even higher performance levels, allowing for more complex models to be utilized effectively.

- AI Edge Computing: As the demand for real-time applications grows, edge computing will facilitate AI processing closer to data sources, reducing latency and enhancing user experiences.

- Integration of AI and IoT: The convergence of AI with the Internet of Things (IoT) will necessitate robust inference solutions. In the data center, technologies like NVLink and NVLink Fusion can help inference backends keep up with the massive datasets that a growing number of devices generate.

Conclusion

NVIDIA NVLink and NVLink Fusion are pivotal in elevating AI inference performance and flexibility. By addressing the challenges of latency, throughput, and scalability described above, these technologies enable organizations to deploy powerful AI solutions across various applications.

As the landscape of AI continues to develop, leveraging advanced hardware in conjunction with optimized software will be crucial for staying competitive. Organizations ready to embrace these technologies are likely to see significant advantages in their AI capabilities, leading to enhanced performance, improved user experiences, and ultimately, greater business success.

Harnessing the power of NVLink and NVLink Fusion places organizations in a prime position to navigate the complexities of AI and achieve their goals with confidence.

Related posts

Building a WordPress Plugin | Jon learns to code with AI

How to add custom Javascript code to WordPress website

6 Best FREE WordPress Contact Form Plugins In 2025!

Solve Puzzles to Silence Alarms and Boost Alertness

Conheça AI do WordPress para construção de sites

WordPress vs Shopify: The Ultimate Comparison for Online Store Owners | Shopify Tutorial

Apple Ends iCloud Support for iOS 10, macOS Sierra on Sept 15, 2025

How to Speed up WordPress Website using AI 🔥(RapidLoad AI Plugin Review)

Bringing AI Agents Into Any UI: The AG-UI Protocol for Real-Time, Structured Agent–Frontend Streams

Web Hosting vs WordPress Web Hosting | The Difference May Break Your Site

Google Lays Off 200+ AI Contractors Amid Unionization Disputes

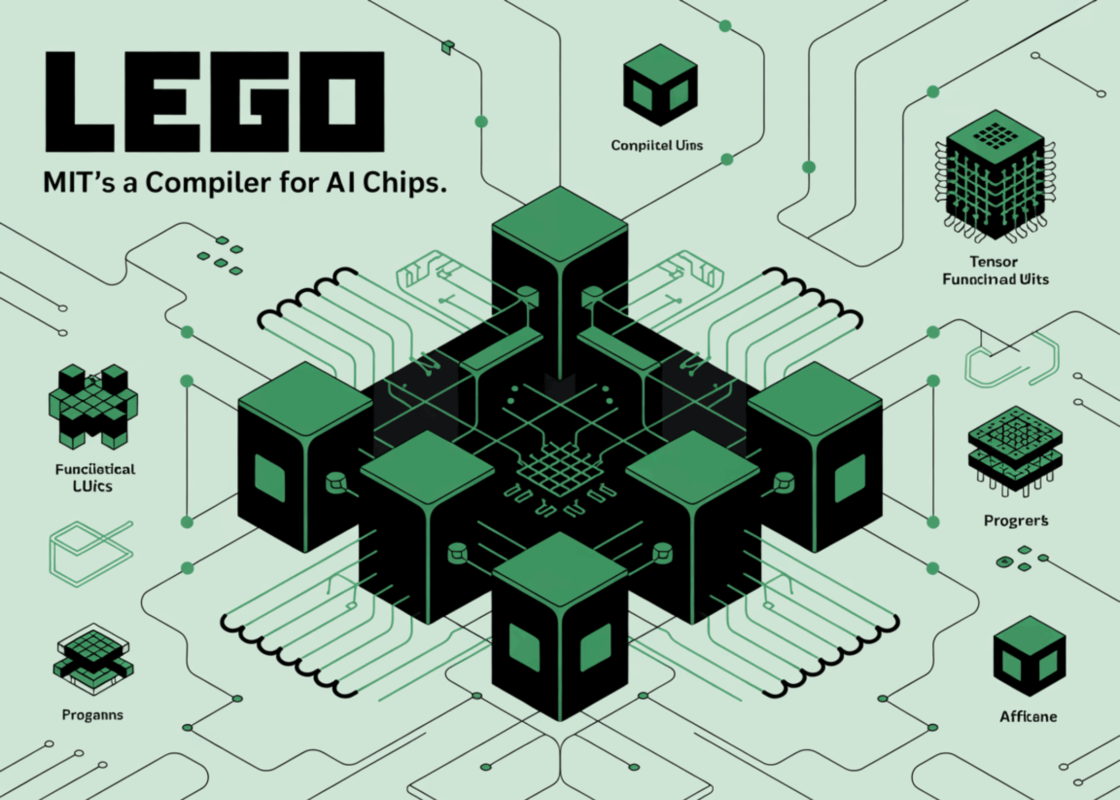

MIT’s LEGO: A Compiler for AI Chips that Auto-Generates Fast, Efficient Spatial Accelerators
