How to Spot (and Fix) 5 Common Performance Bottlenecks in pandas Workflows

Identifying and Resolving Common Performance Bottlenecks in Pandas Workflows

Pandas is an invaluable tool for data analysis and manipulation in Python. However, as datasets grow in size and complexity, you may start to encounter performance bottlenecks in your workflows. In this guide, we’ll explore five common performance issues you may face when using Pandas and how to address them effectively.

Understanding Performance Bottlenecks in Pandas

A performance bottleneck is the part of a process that limits its overall execution time. Bottlenecks can arise from inefficient code, from the sheer size of the dataset, or from limited hardware resources such as memory. Identifying them early is essential for keeping your data workflows efficient.

1. Inefficient Data Loading

The Problem

Data loading can be a significant performance hurdle, especially with large datasets. By default, read_csv() parses every column and infers each column’s type, so loading time and memory use can grow sharply if you don’t tell it what you actually need.

The Solution

Several techniques can help streamline data loading:

- Use Specific Data Types: By specifying data types during the loading process, you can significantly reduce memory usage. For instance, using float32 instead of float64, or category for categorical data, can speed things up.

- Load Only Necessary Columns: If you’re only interested in specific columns, use the usecols parameter to load only what you need.

- Chunking: For extremely large files, consider reading the data in chunks using the chunksize parameter. This method allows you to process the file in manageable segments without overwhelming your memory resources.
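The three loading techniques above can be sketched together as follows. This is a minimal illustration, not a benchmark: the CSV content, the column names (id, city, price, notes), and the chunk size are all made up for the example, and an in-memory string stands in for a large file on disk.

```python
import io

import pandas as pd

# Hypothetical data: a small in-memory CSV standing in for a large file.
csv_text = "id,city,price,notes\n" + "".join(
    f"{i},{'Oslo' if i % 2 else 'Lima'},{i * 1.5},unused\n" for i in range(1000)
)

# Load only the columns we need, with compact dtypes declared up front
# so pandas does not have to infer (and over-allocate) them.
df = pd.read_csv(
    io.StringIO(csv_text),
    usecols=["id", "city", "price"],
    dtype={"id": "int32", "city": "category", "price": "float32"},
)

# Read the same data in chunks, keeping only a running total in memory.
total_price = 0.0
for chunk in pd.read_csv(io.StringIO(csv_text), usecols=["price"], chunksize=250):
    total_price += chunk["price"].sum()
```

With a real file you would pass its path instead of io.StringIO(...). Which dtypes are safe depends on your data; float32, for example, trades precision for memory.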

2. Unoptimized Data Filtering

The Problem

Filtering datasets can become slow, especially with large DataFrames and complex conditions.

The Solution

To enhance filtering performance, consider the following strategies:

- Boolean Indexing: Use boolean masks to filter DataFrames instead of iterating through rows. This method is more efficient and can reduce execution time.

- Utilize .query(): Pandas provides a .query() method that allows for more readable and potentially faster filtering. This method is especially useful for more complex conditions.

- Set Indexes: If you frequently filter based on certain columns, setting an index can speed up repeated queries. Use the set_index() method to designate a column as the index, enhancing lookup speed.
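As a rough sketch of these three filtering styles, the example below applies the same condition with a boolean mask and with .query(), then shows an index-based lookup. The DataFrame, its column names (region, sales), and the threshold are invented for illustration. Sorting the index after set_index() is an extra step assumed here, since label lookups generally work best on a sorted index.

```python
import numpy as np
import pandas as pd

# Hypothetical data for illustration only.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "region": rng.choice(["north", "south", "east"], size=10_000),
    "sales": rng.integers(0, 1_000, size=10_000),
})

# Boolean mask: evaluated column-wise, with no Python-level row loop.
mask = (df["region"] == "north") & (df["sales"] > 500)
by_mask = df[mask]

# The same condition expressed with .query().
by_query = df.query("region == 'north' and sales > 500")

# For repeated lookups on one column, set it as a (sorted) index
# and select by label.
indexed = df.set_index("region").sort_index()
north = indexed.loc["north"]
```

Whether .query() or an index lookup is actually faster than a plain mask depends on data size and your environment, so it is worth timing both on your own data.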

3. Slow Data Aggregation

The Problem

Data aggregation operations, such as groupby() or pivot tables, can become slow if executed without proper optimization.

The Solution

Consider these optimization techniques for faster aggregations:

- Use Built-in Aggregation Functions: Instead of applying custom functions with .apply(), try to use built-in functions like .sum(), .mean(), etc., as they are optimized for performance.

- GroupBy Efficiency: When using groupby(), ensure that you’re only aggregating necessary columns. This practice minimizes the computation required during aggregation.

- Cython and Numba: If you require custom aggregation functions, consider using Cython or Numba to compile Python code into machine code, dramatically increasing performance compared to pure Python functions.
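The first two points can be sketched as below. The example contrasts a per-group Python function with built-in aggregations applied to a pre-selected set of columns. The data and column names (store, units, price, comment) are hypothetical, and the Cython and Numba route is not shown because it needs extra dependencies.

```python
import numpy as np
import pandas as pd

# Hypothetical data for illustration only.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "store": rng.choice(["a", "b", "c"], size=10_000),
    "units": rng.integers(1, 20, size=10_000),
    "price": rng.random(10_000) * 100,
    "comment": "n/a",  # a column we do not need to aggregate
})

# Slower pattern: a Python function is called once per group.
slow = df.groupby("store")["units"].apply(lambda s: s.sum())

# Faster pattern: select only the needed columns, then use
# built-in aggregations by name.
fast = df.groupby("store")[["units", "price"]].agg(
    total_units=("units", "sum"),
    mean_price=("price", "mean"),
)
```

Both approaches give the same totals here. The difference is that the named built-ins run in optimized code paths rather than in a Python callback.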

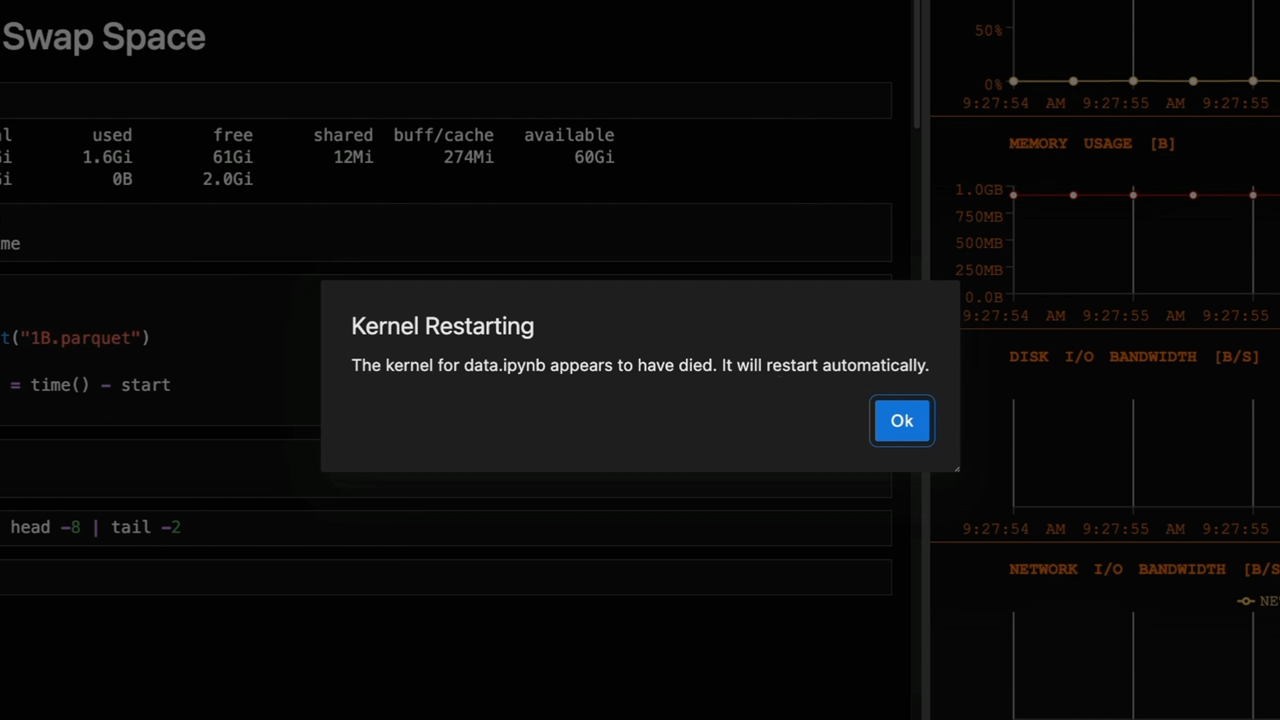

4. Memory Inefficiency

The Problem

Pandas DataFrames can consume significant memory, especially with large datasets, leading to slow performance and even crashes.

The Solution

To alleviate memory stress, you can implement these strategies:

- Downcasting Numeric Types: Use pd.to_numeric() with the downcast option to convert numeric data to the smallest type that can hold the values, which can free up substantial memory.

- Removing Unused Data: If there are columns that aren’t required for analysis, drop them using the drop() method. This simple step can reduce memory usage.

- Garbage Collection: After deleting large DataFrames you no longer need (for example with del), you can call gc.collect() to prompt Python to reclaim memory held in reference cycles. CPython already frees most objects as soon as their last reference disappears, so the benefit of an explicit call varies.
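A minimal sketch of these memory techniques follows, using memory_usage(deep=True) to compare the footprint before and after. The DataFrame and its column names (count, ratio, scratch) are invented for the example, and the savings you see will depend on your own data.

```python
import gc

import numpy as np
import pandas as pd

# Hypothetical data: small integers stored as int64, floats as float64.
df = pd.DataFrame({
    "count": np.arange(100_000, dtype="int64") % 100,
    "ratio": np.linspace(0.0, 1.0, 100_000),
    "scratch": np.zeros(100_000),  # a column we do not need
})
before = df.memory_usage(deep=True).sum()

# Drop the unused column, then downcast the numeric columns.
df = df.drop(columns=["scratch"])
df["count"] = pd.to_numeric(df["count"], downcast="integer")
df["ratio"] = pd.to_numeric(df["ratio"], downcast="float")
after = df.memory_usage(deep=True).sum()

# Release a large temporary object, then ask the collector to run.
temporary = pd.DataFrame({"x": np.zeros(100_000)})
del temporary
gc.collect()
```

Downcasting floats reduces precision, so it is worth checking that the smaller type is acceptable for your analysis before applying it broadly.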

5. Inefficient Merges and Joins

The Problem

Combining multiple DataFrames through merges or joins can lead to slowdowns, particularly if the DataFrames are large.

The Solution

Here are methods to optimize your merge and join operations:

- Indexing: Similar to filtering, setting an index on the columns you’re merging on can speed up the merge process. This optimization helps Pandas efficiently align rows.

- Using merge() with Suffixes: Prevent ambiguity by using the suffixes argument to label overlapping column names. This aids clarity rather than speed, replacing the default _x and _y suffixes with names that say which DataFrame each column came from.

- Choosing the Right Merge Type: Depending on the task, evaluate whether you really need an outer join. An inner join produces fewer rows, is often faster, and is frequently all the analysis needs.
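The merge options above might look like this in practice. The two DataFrames, their column names (customer_id, amount, status), and the suffix strings are hypothetical, chosen so that both sides share a status column and the suffixes have something to disambiguate.

```python
import pandas as pd

# Hypothetical data for illustration only.
orders = pd.DataFrame({
    "customer_id": [1, 2, 2, 4],
    "amount": [10.0, 25.0, 5.0, 40.0],
    "status": ["paid", "paid", "open", "paid"],
})
customers = pd.DataFrame({
    "customer_id": [1, 2, 3],
    "status": ["gold", "silver", "gold"],
})

# Inner join keeps only keys present on both sides; suffixes label
# the overlapping "status" column from each DataFrame.
inner = orders.merge(
    customers,
    on="customer_id",
    how="inner",
    suffixes=("_order", "_customer"),
)

# Index-based alternative: set the key as the index on both sides,
# then join on the index.
joined = orders.set_index("customer_id").join(
    customers.set_index("customer_id"),
    how="inner",
    lsuffix="_order",
    rsuffix="_customer",
)
```

On small frames like these, the two approaches perform the same. Any speed advantage from index-based joins tends to show up only on large data or when the same index is reused across several joins.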

Monitoring and Profiling Your Workflows

To efficiently resolve performance bottlenecks, it’s also crucial to monitor your workflows and measure their execution time. Use Python’s built-in libraries, such as time and timeit, to track function execution and identify slow segments.

In addition, consider using profiling tools such as cProfile or line_profiler to assess which parts of your code are consuming the most time and resources. Profiling provides a clearer picture of where to focus your optimization efforts.
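As a small illustration of both approaches, the sketch below times two versions of the same computation with timeit and then profiles the slower one with cProfile. The functions loop_sum and vector_sum are hypothetical examples written for this purpose. line_profiler is not shown because it is a separate install.

```python
import cProfile
import io
import pstats
import timeit

import numpy as np
import pandas as pd

df = pd.DataFrame({"x": np.arange(50_000)})


def loop_sum(frame):
    """Sum a column with a Python-level loop (deliberately slow)."""
    total = 0
    for value in frame["x"]:
        total += value
    return total


def vector_sum(frame):
    """Sum the same column with the built-in vectorized method."""
    return frame["x"].sum()


# timeit: total seconds for 5 runs of each version.
loop_time = timeit.timeit(lambda: loop_sum(df), number=5)
vector_time = timeit.timeit(lambda: vector_sum(df), number=5)

# cProfile: record where time goes inside the slow version.
profiler = cProfile.Profile()
profiler.enable()
loop_sum(df)
profiler.disable()

# Write the top entries, sorted by cumulative time, into a string.
report = io.StringIO()
pstats.Stats(profiler, stream=report).sort_stats("cumulative").print_stats(5)
```

Printing report.getvalue() shows the profile table. Exact timings vary by machine, so treat them as relative comparisons rather than absolute figures.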

Conclusion

By identifying and fixing these common performance bottlenecks in your Pandas workflows, you can significantly improve speed and efficiency. Focusing on data loading, filtering, aggregation, memory management, and merging strategies will allow your data operations to run smoothly and efficiently. Armed with these techniques, you’ll be better prepared to handle even the most complex data challenges. Embrace these optimizations, and watch your Pandas workflows transform into a more effective tool for analysis.